You can use other transformations such rotation, cropping, zooming, and translation. By creating copies of your duck images and flipping them horizontally, you have doubled the training examples for the “duck” class. This is called “data augmentation.”įor example, say you have twenty images of ducks in your image classification dataset. One of the ways to increase the diversity of the training dataset is to create copies of the existing data and make small modifications to them. This challenge becomes even more difficult in supervised learning applications where training examples must be labeled by human experts. However, gathering extra training examples can be expensive, time-consuming, or sometimes impossible. On the other hand, if a CNN is trained on images of objects from different angles and under different lighting conditions, it will become more robust at identifying them in the real world.

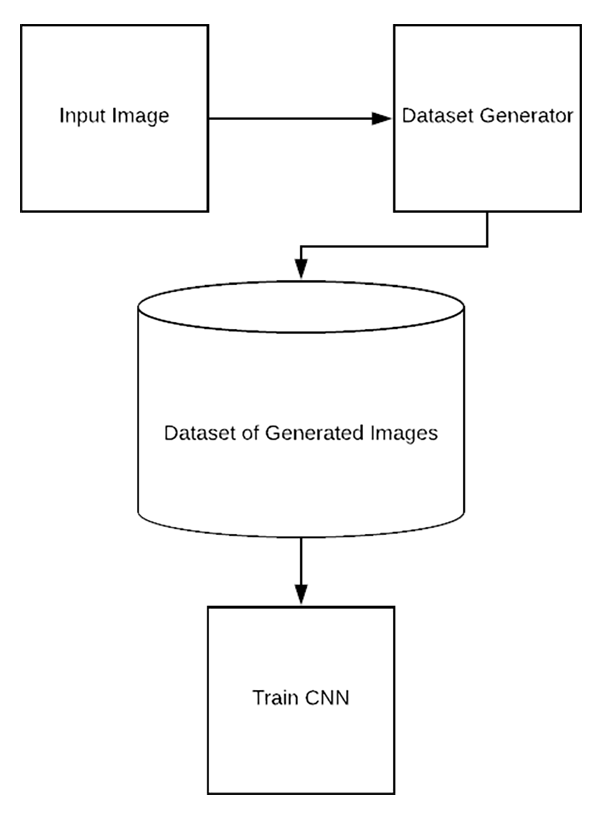

Without a large and diverse set of training examples, a CNN will end up misclassifying images in the real world. But ultimately, the main remedy to overfitting is adding more quality data to the training dataset.įor example, consider the convolutional neural network ( CNN), a type of machine learning architecture that is especially good for image classification tasks. There are several ways to avoid overfitting in machine learning, such as choosing different algorithms, modifying the model’s architecture, and adjusting hyperparameters. When machine learning models are trained on limited examples, they tend to “overfit.” Overfitting happens when an ML model performs accurately on its training examples but fails to generalize to unseen data. Data augmentation is a low-cost and effective method to improve the performance and accuracy of machine learning models in data-constrained environments. One solution to this problem is “data augmentation,” a technique that generates new training examples from existing ones. Unfortunately, for many applications, access to quality data remains a barrier. Machine learning models can perform wonderful things-if they have enough training data.
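To make the duck example concrete, here is a minimal sketch in Python, assuming each image is a small grayscale grid stored as a list of lists of pixel values. The helper names (`flip_horizontal`, `rotate_90`, `translate`, `crop`, `augment_with_flips`) are hypothetical and written only for illustration; real projects would typically rely on the built-in transforms of an image or deep learning library instead.

```python
from typing import List

# Hypothetical representation: a grayscale image as rows of pixel intensities.
Image = List[List[int]]


def flip_horizontal(img: Image) -> Image:
    """Mirror the image left-to-right by reversing each row."""
    return [list(reversed(row)) for row in img]


def rotate_90(img: Image) -> Image:
    """Rotate the image 90 degrees clockwise (reverse row order, then transpose)."""
    return [list(row) for row in zip(*img[::-1])]


def translate(img: Image, dx: int, fill: int = 0) -> Image:
    """Shift the image dx pixels to the right, padding vacated pixels with `fill`."""
    if dx < 0:
        raise ValueError("this sketch only shifts to the right (dx >= 0)")
    width = len(img[0])
    dx = min(dx, width)
    return [[fill] * dx + row[: width - dx] for row in img]


def crop(img: Image, top: int, left: int, height: int, width: int) -> Image:
    """Cut out a height x width window whose top-left corner is (top, left)."""
    return [row[left:left + width] for row in img[top:top + height]]


def augment_with_flips(images: List[Image]) -> List[Image]:
    """Return the original images plus one horizontally flipped copy of each."""
    return images + [flip_horizontal(img) for img in images]


if __name__ == "__main__":
    # Twenty toy 3x3 "duck" images (placeholder pixel values, not real data).
    ducks = [[[i, i + 1, i + 2], [0, 0, 0], [9, 9, 9]] for i in range(20)]
    augmented = augment_with_flips(ducks)
    print(len(ducks), "->", len(augmented))  # 20 -> 40
    print(flip_horizontal([[1, 2, 3]]))      # [[3, 2, 1]]
```

Each flipped copy keeps its “duck” label, which is what makes the doubling useful. Which transformations are safe depends on the data, though: mirroring a photo of a duck preserves its class, but mirroring an image of a digit or a letter may not.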


This article is part of Demystifying AI, a series of posts that (try to) disambiguate the jargon and myths surrounding AI.